Many studios are vying for the title of best game developer, but few are truly worthy candidates. Among the few development houses that do fit the bill, Naughty Dog is often singled out for its multiple GOTY awards and deep, engaging stories.

As one of PlayStation’s closest partners, Naughty Dog is renowned for creating gaming experiences on par with cinema. As gamers, we tend to take story design for granted, without realizing the detail and effort that go on behind the scenes. So how does Naughty Dog tap into our emotions and encourage the spirit of exploration? In other words, what makes their games special?

Ryan M. James, the lead editor for Naughty Dog, took the stage to talk about how the company creates realistic performances in games. From the title of the session, I could sense that people at the company think like designers. Below is the gist of the session.

*This article has been written from the perspective of the speaker for readability.

I’ve worked at Naughty Dog as an editor for seven years. My role is to help the team, including the actors, perform at their best. Understanding what makes a performance realistic and compelling is the key to great in-game performances. This involves not only the actors but everything from writing and visuals to audio and camera work.

At Naughty Dog, we aim to deliver dynamic, cinema-like experiences through gaming. Emotional moments should keep hitting home across seamless spaces, without being disrupted by cutscenes. We have experts who oversee every aspect of character backstory and other intricate details, and our job is to push them to reach higher through self-assessment and introspection.

So what makes a performance realistic? The answer is not simple. A character’s actions and words, along with the visual elements, form the foundation of a realistic performance. We have to design gameplay so that cutscenes and other elements work together dynamically and make players feel invested in the characters.

Game development involves constant change. Once one part of the game is finished, it’s handed off to the editing team. At these moments, we’re dedicated to pursuing fresh, worthwhile changes.

The first step in development is pre-production. We create a large number of scenarios and storyboards to help actors immerse themselves in the world and shape their performances. This process helps them visualize the flow of gameplay.

One important rule is that cutscenes always have to come before gameplay. We create all cutscenes with motion capture, but motion capture comes with many caveats. To avoid having actors perform in a vacuum, we have to set up the environment with other actors and the necessary interactions. Motion capture requires careful preparation to suit this special environment.

Before motion capture, the team reads through the script and exchanges feedback. Sometimes we make changes on the spot after collecting comments from actors and editors. During the motion capture session, we record everything, from multiple angles all the way to sound. The recording then becomes the master film, which serves as the foundation of the cutscenes.

At this stage, the editor’s job is to stay faithful to the director’s original intent. It’s my duty to apply the director’s instructions, emphasis, and details. If something deviates from the design, I document the change and reflect it in the script.

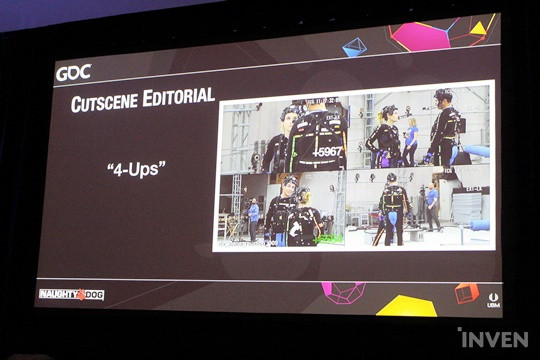

Another rule is to base the motion capture output on what we call 4-Ups. We create and compare a total of four shots: one final output, two facial motion captures (one for each character), and one motion capture of the whole scene. Working from 4-Ups gives us virtually unlimited freedom in building cutscenes, such as placing cameras, rendering faces and skin, and adjusting audio files. We can use every part of the final output without being limited to a few camera angles.

The industry has a practice called Picture Lock, in which finished cutscenes are “locked” and no further changes are made. We prefer what we call Picture Latch, in which a cutscene is treated as finished but can still receive minor changes. By continually refining the animation and models, we aim to make players believe that cutscenes take place in seamless spaces with continuity intact. Cutscenes and gameplay have to flow naturally into each other.

Next is a technique we call In-Game Cinema (IGC), which works inside gameplay. Driving the narrative with cutscenes alone would be both unnatural and impossible. IGC fills the gaps between gameplay and cutscenes by serving as miniature cinematic scenes.

Audio is paramount in IGC, but adjusting it is a challenge of its own. One issue is that the player-controlled camera takes on the role of the listener. At the same time, the camera serves as both a microphone, listening to the character, and a speaker, telling players what to do. As a result, we have to tune the audio to hold up under varying conditions such as distance, direction, and other factors.
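The talk does not describe how Naughty Dog’s audio engine handles a camera acting as the listener. As a rough illustration of why distance and direction matter, here is a minimal Python sketch that computes a gain and a stereo pan for a sound source relative to the camera. It assumes a flat 2D world, simple inverse-distance attenuation, and sine-based panning; the names `listener_mix`, `ref_distance`, and `max_distance` are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import Tuple


@dataclass
class Vec2:
    x: float
    y: float


def listener_mix(listener_pos: Vec2, listener_facing: float, source_pos: Vec2,
                 ref_distance: float = 1.0, max_distance: float = 30.0) -> Tuple[float, float]:
    """Return (gain, pan) for a source heard by a camera acting as the listener.

    Hypothetical model, not Naughty Dog's: gain is 1.0 within ref_distance,
    falls off as ref_distance / distance, and is 0.0 at or beyond max_distance.
    Pan runs from -1.0 (full left) to 1.0 (full right).
    listener_facing is the camera's heading in radians (0 = +x, CCW positive).
    """
    dx = source_pos.x - listener_pos.x
    dy = source_pos.y - listener_pos.y
    distance = math.hypot(dx, dy)

    # Distance attenuation: louder when close, silent when out of range.
    if distance >= max_distance:
        gain = 0.0
    else:
        gain = ref_distance / max(distance, ref_distance)

    # Direction: bearing of the source relative to where the camera faces.
    bearing = math.atan2(dy, dx) - listener_facing
    # A source clockwise from the facing direction (to the right) pans right.
    pan = -math.sin(bearing)
    return gain, pan


# A line spoken 5 units to the camera's right: quieter and panned right.
gain, pan = listener_mix(Vec2(0, 0), 0.0, Vec2(0, -5))
```

Because the camera moves with the player, these values change every frame, which is one reason dialogue in IGC has to be tuned for many listening positions rather than a single fixed shot.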

Of course, that’s not all. Take, for example, this gameplay scene in which Nathan confronts his rival and the enemy mercenaries while exploring the ruins. Here we can see the conveyor belt of storytelling at work. In this five-minute scene, numerous story elements, including IGC, cutscenes, and dialogue, drive the story forward.

What I’d like to emphasize is the use of dialogue during gameplay, which fills the gaps between cutscenes and brings the characters to life. Every line spoken during gameplay serves this purpose.

Many IGC voice recordings take place separately from motion capture. Separate scripts are written for these sessions, and the resulting lines are an important part of gameplay. For instance, in-game lines remind players of important objectives, goals, and interactions. In short, they drive and support the player’s progress through the game.

Every line of dialogue has a purpose and a right moment, just as every hero has allies who lend a hand. Friendly NPCs are designed to make simple remarks like “it’s this way!” or “look here!” These lines carry the same message, so similar expressions are grouped together and played at random.
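The talk only says that interchangeable lines are grouped and played at random. A minimal Python sketch of such a group might look like the following; the extra rule that the same variant never plays twice in a row is an assumption added for illustration, and `LineGroup` is a hypothetical name.

```python
import random
from typing import List, Optional


class LineGroup:
    """A hypothetical group of interchangeable lines carrying the same message.

    Each pick is random, but (as an assumed rule) the variant played
    immediately before is skipped whenever another variant is available.
    """

    def __init__(self, lines: List[str], rng: Optional[random.Random] = None):
        if not lines:
            raise ValueError("a line group needs at least one line")
        self.lines = list(lines)
        self.rng = rng or random.Random()
        self.last: Optional[str] = None

    def pick(self) -> str:
        # Exclude the previous variant; fall back to all lines if only one exists.
        candidates = [line for line in self.lines if line != self.last] or self.lines
        choice = self.rng.choice(candidates)
        self.last = choice
        return choice


directions = LineGroup(["It's this way!", "Over here!", "Look here!"], random.Random(7))
first = directions.pick()
second = directions.pick()  # never the same string as `first`
```

Avoiding immediate repeats is a common way to keep frequently triggered barks from sounding mechanical, but the talk does not say whether Naughty Dog uses this rule.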

In the script, we mark the timing of each line, such as the order of dialogue for each character and which lines come before and after it. These notes serve as guidelines. We also have to consider players’ playstyles when designing and timing these lines.

Some players closely follow all of the story dialogue, whereas others skip it to get to the gameplay faster. Helpful dialogue therefore has to arrive quickly to help players find their bearings, which is why the timing of each line is crucial.

When spoken words aren’t enough to deliver a complete experience, we add gestures. The main protagonist and a few special AI characters may have their own gestures. These gestures are effective at conveying a character’s intentions and emotional state. Since players usually see the character from behind, where facial expressions aren’t visible, gestures do a better job of showing emotion.

Next, I’d like to talk about systemic dialogue, such as a friendly AI reacting to an enemy by saying, “here’s an enemy!” This kind of dialogue streamlines game progression and prompts players to decide on their next course of action. Each message has several versions that are played at random. Since interacting with enemy AI adds realism, enemy dialogue is important too. The main protagonist, enemies, and multiplayer characters all use systemic dialogue.

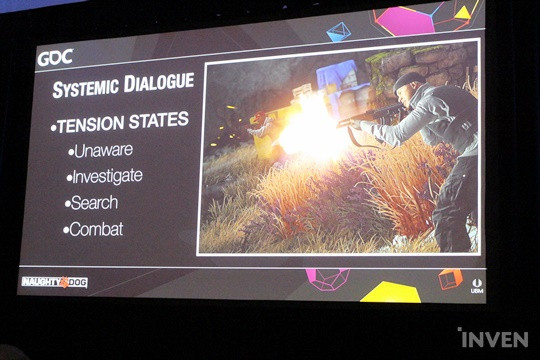

The enemy’s systemic dialogue changes according to the enemy’s state, which indicates how much attention the enemy is paying to the player. The first state is when the enemy is unaware of the player. In this state, enemy characters chat naturally among themselves, while the player and friendly NPCs whisper to one another.

Next is the investigative state, in which an enemy searches the area, yells, and calls for help. At this point, players feel threatened and must decide whether to fight or flee. Then comes the search state, in which an enemy, fully aware of the player’s presence, actively hunts for him or her. Allies in this state try to help the protagonist by calling out the enemy’s location.

In the final state, combat, everyone gets loud, which sharpens the player’s combat reflexes. Friendly AI gives valuable advice depending on the enemy type. Enemies, for their part, use dialogue to signal when they’re about to hit the player with something new, such as an ambush, a new type of weapon, or a new attack pattern. Friendly characters also offer tips on how to deal with these new threats. The protagonist’s dialogue should reflect the player’s emotions.
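The enemy states described above map naturally onto an ordered state value plus a lookup table of dialogue categories. The following Python sketch is an illustration under assumptions, not Naughty Dog’s system: the enum names, the category strings (paraphrased from the talk), and the rule that awareness only ever escalates are all hypothetical.

```python
from enum import IntEnum
from typing import Dict, Optional, Tuple


class Awareness(IntEnum):
    """Hypothetical enemy awareness levels, ordered from least to most alert."""
    UNAWARE = 0
    INVESTIGATING = 1
    SEARCHING = 2
    COMBAT = 3


# (state, speaker) -> dialogue category, paraphrasing the talk.
# Pairs the talk does not mention are simply absent.
DIALOGUE_TABLE: Dict[Tuple[Awareness, str], str] = {
    (Awareness.UNAWARE, "enemy"): "idle_chatter",
    (Awareness.UNAWARE, "ally"): "whisper",
    (Awareness.INVESTIGATING, "enemy"): "call_for_help",
    (Awareness.SEARCHING, "ally"): "call_out_enemy_location",
    (Awareness.COMBAT, "ally"): "tactical_tip",
    (Awareness.COMBAT, "enemy"): "telegraph_new_attack",
}


def dialogue_category(state: Awareness, speaker: str) -> Optional[str]:
    """Return the dialogue category for this speaker in this state, if any."""
    return DIALOGUE_TABLE.get((state, speaker))


def escalate(current: Awareness, observed: Awareness) -> Awareness:
    """Assumed rule for this sketch: awareness only rises, never drops."""
    return max(current, observed)


state = escalate(Awareness.UNAWARE, Awareness.INVESTIGATING)
line_type = dialogue_category(state, "enemy")  # "call_for_help"
```

Keeping the states ordered makes "more alert than" a simple comparison, and keeping the dialogue in a table lets writers add or change lines per state without touching the state logic.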

Ultimately, these lines are broken down into basic modules, such as subject, verb, and object, so that natural-sounding dialogue can be assembled to fit the context.
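To show why splitting lines into subject, verb, and object modules pays off, here is a minimal Python sketch that assembles a line from fragments chosen by context. It works on text for readability, whereas a game would stitch together recorded audio clips; the fragment table and `compose_line` are hypothetical.

```python
from itertools import product
from typing import Dict, List

# Hypothetical recorded fragments, keyed by grammatical role and context tag.
FRAGMENTS: Dict[str, Dict[str, str]] = {
    "subject": {"single": "He's", "group": "They're"},
    "verb": {"flank": "flanking", "reload": "reloading"},
    "object": {"left": "on the left!", "right": "on the right!", "none": "now!"},
}


def compose_line(subject: str, verb: str, obj: str) -> str:
    """Join one fragment per role, chosen by the current game context."""
    parts = [
        FRAGMENTS["subject"][subject],
        FRAGMENTS["verb"][verb],
        FRAGMENTS["object"][obj],
    ]
    return " ".join(parts)


def all_lines() -> List[str]:
    """Every line the fragment table can produce."""
    return [
        compose_line(s, v, o)
        for s, v, o in product(FRAGMENTS["subject"], FRAGMENTS["verb"], FRAGMENTS["object"])
    ]


line = compose_line("group", "flank", "left")  # "They're flanking on the left!"
```

With this table, 7 recorded fragments yield 2 × 2 × 3 = 12 distinct lines, which illustrates how a modular approach covers many situations without recording every combination separately.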

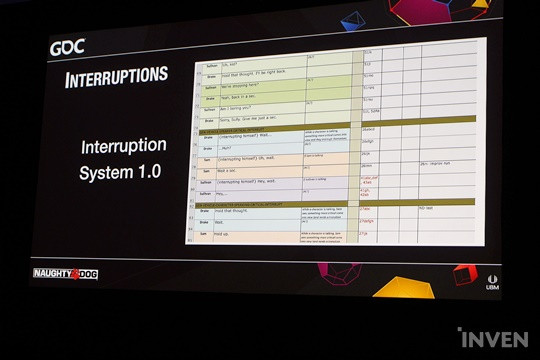

Another thing to keep in mind is that systemic dialogue must be able to be interrupted at any moment and then resume naturally. To keep the dialogue flowing naturally when, for example, an enemy’s line is cut off by the player’s gunfire, a few more adjustments are needed. Building on three principal elements (screams, onomatopoeia, and interjections), we created a system that accounts for the appropriate speaking tone, the priority order of important dialogue, and resume points for continuing the dialogue after an interruption.
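The talk names resume points without describing how they are implemented. One plausible reading, sketched in Python below, is that each line is split into segments, some of which are marked as safe places to restart. When the line is cut off, a reaction (a scream, onomatopoeia, or interjection) plays, and playback restarts from the nearest earlier resume point. `SystemicLine` and `play_with_interruption` are hypothetical names, and tone and priority handling are left out.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class SystemicLine:
    segments: List[str]
    # Indices of segments it sounds natural to restart from after an interruption.
    resume_points: List[int] = field(default_factory=lambda: [0])

    def resume_index(self, interrupted_at: int) -> int:
        """Latest resume point at or before the interrupted segment (0 if none)."""
        return max((p for p in self.resume_points if p <= interrupted_at), default=0)


def play_with_interruption(line: SystemicLine, interrupted_at: int, reaction: str) -> List[str]:
    """Return what the player hears when `line` is cut off during segment `interrupted_at`.

    Segments before the interrupted one play in full, the reaction cuts in,
    and playback resumes from the nearest earlier resume point.
    """
    played = line.segments[:interrupted_at]
    resume = line.resume_index(interrupted_at)
    return played + [reaction] + line.segments[resume:]


line = SystemicLine(["Hey,", "check the east side,", "I heard something over there."], [0, 1])
heard = play_with_interruption(line, 2, "Argh!")
# ["Hey,", "check the east side,", "Argh!", "check the east side,", "I heard something over there."]
```

Restarting from a resume point, rather than from the exact cut, avoids picking up mid-phrase, at the cost of occasionally repeating a short segment.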

Once everything else is in place, the polish phase is where the real work happens.

In this phase, every element in the game has to be checked for final context. Everything from the character pose and expression to dialogue and action has to reflect the intended emotion and mood in a natural, persuasive fashion. Actions and words have to be in line with the context and continuity. Our constant challenge is to distinguish between things that need to be changed and those that need to remain the same.

Then comes localization, which is a demanding task on its own. Since all storytelling material, such as dialogue and cutscenes, is closely interconnected, localizing it into another language is a tremendously arduous feat. This is compounded by the fact that localization schedules have always been very tight. Localization takes place in the small window between the end of development and the launch date. The process is hectic, to say the least.

We have to handpick which lines will be played, review how they drive gameplay, and rework audio and scripts from scratch, all of which takes manual editing effort. Meanwhile, every change has to be documented and controlled.

The biggest hurdle of all is time. We never have much of it and always have to work within tight time constraints.

“We do all crazy shit.”

As a team, each of us has given our best in our own department. I hope you have fun playing our games.
